How Do You Build Your AI Factory to Scale Impact?

Mar 12, 2026

By the end of 2025, most organisations had tried AI. What remains rare is scaling AI into core business processes with measurable outcomes. The Data Masterclass Christmas Edition community call focused on exactly that gap.

The session brought together Michael Gramlich and Alexander Borek (Data Masterclass), Andreas Odenkirchen and Katharina Egler (PwC), Hannah Tschannen (Swiss Post), and Priti Padhy (CEO, NexgAI).

You can watch the full session here.

Across all three episodes, the message was consistent. Pilots are not the problem. Missing foundations are. Scaling AI requires an end-to-end value chain, reusable delivery patterns, and leadership that treats AI as a business capability rather than a technical experiment.

Alexander Borek framed this shift clearly early in the session, “By the end of 2025, the question is not ‘do we do AI or not?’ The question is how we do it so it scales.”

The Core Problem: Activity Without Process Change

Most organisations are active in AI. They run PoCs, demos, chatbots, copilots, and productivity pilots. But this activity often stays outside the processes that drive operational performance and P&L.

To describe this gap, Alexander Borek introduced a metaphor that resonated strongly with the audience. He described organisations as “dancing around the fire.” They stay close enough to show progress, but avoid stepping into the fire itself. Stepping into the fire means redesigning how work is done, not just adding tools on top.

This pattern mirrors earlier waves of Big Data and data science. Use cases were chosen opportunistically. Teams built isolated solutions. End-to-end value chains were rarely engineered.

Michael Gramlich added a concrete failure mode he sees repeatedly in practice. Many AI systems are built without strong user pull or workflow integration. As a result, they never reach sustained adoption.

As he put it, “They’re building something cool, but they develop into Nirvana and not into the hands of what users want or need.”

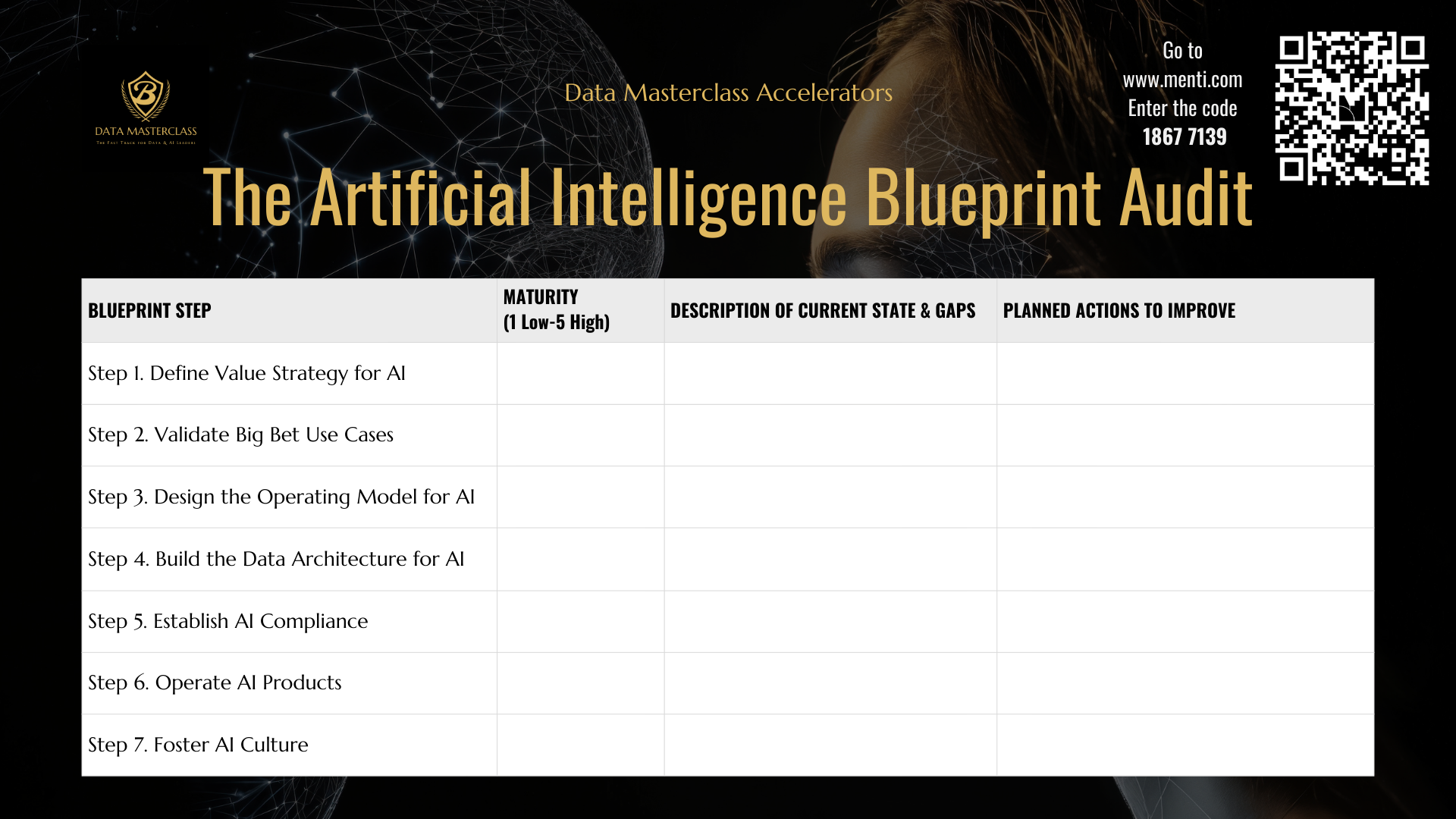

The 7-Step Blueprint for AI at Scale

Episode 1 outlined a seven-part blueprint that treats AI as a full delivery system. The central point was that most organisations focus on one slice, typically the model or prototype, while scale requires all seven working together.

- AI value strategy and big bets: The discussion stressed moving from “what can we try?” to “where does AI materially change our business?” Borek described leadership workshops that result in a small number of strategic bets tied directly to business outcomes and data needs.

- Product design and validation: Scaling requires deep process immersion. This includes observing real workflows, co-designing with users, and iterating quickly. A recurring gap discussed was the lack of product management and UX skills in many AI teams.

- Operating model: Ambition stalls without clear roles and ownership. The call repeatedly returned to hub-and-spoke models, with central teams owning strategy, guardrails, platforms, and monitoring, while domain teams own delivery.

- Technical and data architecture: AI needs context, not just data. Context includes process documentation, metadata, business rules, and enterprise knowledge made accessible through vector databases and knowledge graphs.

- Compliance and EU AI Act readiness: Risk tiering, logging, traceability, documentation, and versioning were discussed as practical requirements that also improve operational quality, not just regulatory compliance.

- AI Ops: Once systems reach production, monitoring, observability, model management, runbooks, CI/CD, and evaluation become critical. The group noted that non-deterministic outputs complicate AI operations compared with traditional software.

- Culture and adoption: Adoption depends on enablement, champions, incentives, and visible business ownership. It cannot be treated as an afterthought.

Summing this up, Borek emphasised that scale only comes from addressing the whole chain, “We have to look at the end-to-end value chain of AI, not just delivering the AI bit.”

The AI Factory: Reuse as the Scaling Mechanism

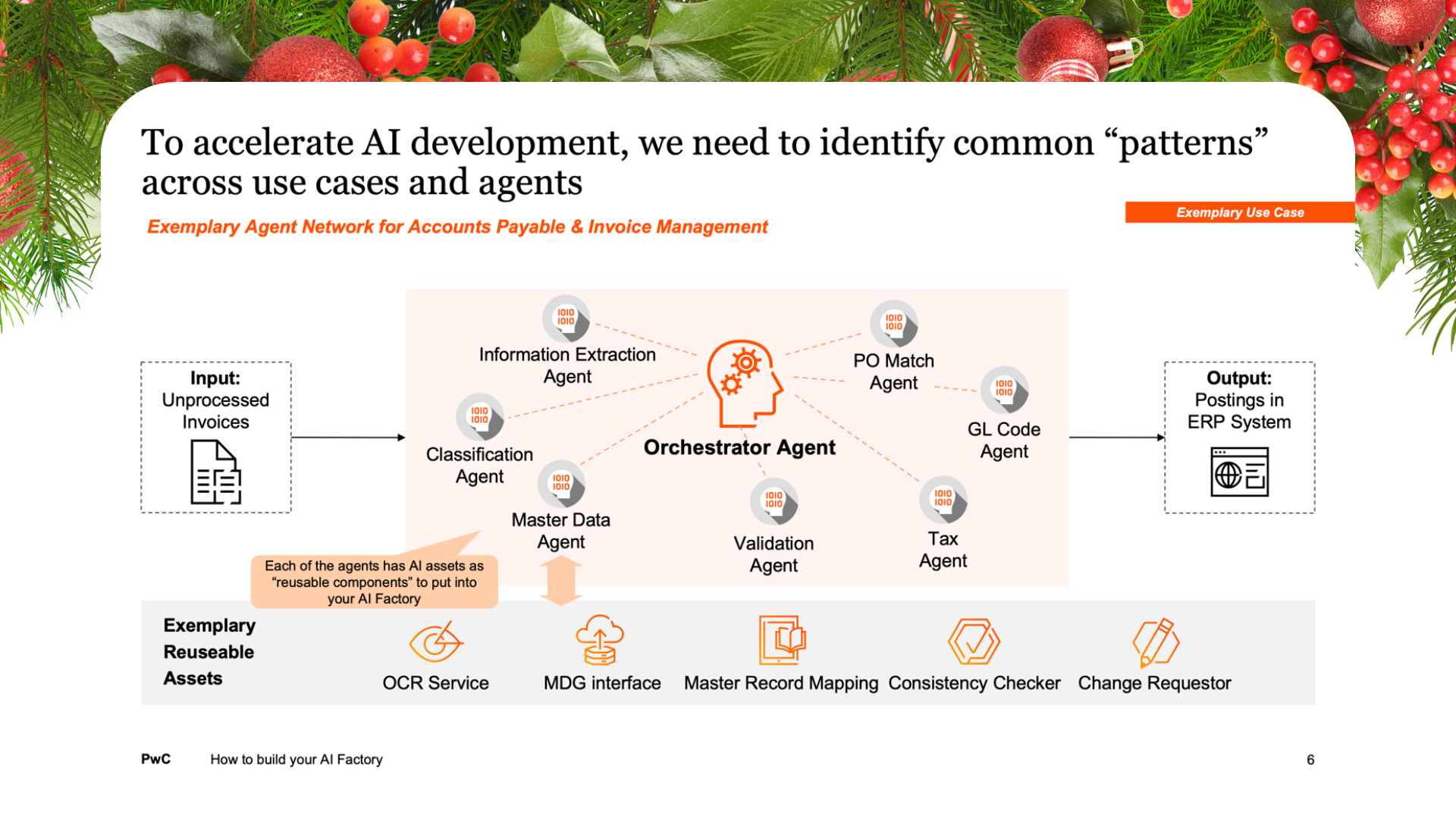

Episodes 2 and 3 shifted from theory to execution. Andreas Odenkirchen and Katharina Egler introduced the AI Factory as a way to stop building one-off solutions and instead create reusable assets and patterns.

Example: invoice processing with agents

Katharina described a multi-agent invoice process covering document classification, extraction, vendor matching, tax codes, GL coding, and orchestration. The key insight was that value sits in the reusable assets behind these agents: ERP and master data interfaces, validation logic, and change request flows.

These assets can be reused across other domains, such as customer or material master data.
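To make the reuse argument concrete, here is a minimal sketch of how such an invoice flow might be decomposed. It assumes each agent is a step function over a shared invoice record and that the reusable asset (a hypothetical `MasterDataInterface`) is injected into the steps rather than hard-coded. All class and function names are illustrative, the "agents" are simple rules standing in for model calls, and only classification, extraction, vendor matching, and orchestration are shown; tax codes and GL coding would be further steps of the same shape.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Callable, Optional


# Hypothetical reusable asset: a master data interface. In a real system this
# would wrap an ERP or MDM API; here it is an in-memory lookup.
@dataclass
class MasterDataInterface:
    records: dict[str, str]  # normalised name -> master data ID

    def match(self, name: str) -> Optional[str]:
        return self.records.get(name.strip().lower())


@dataclass
class Invoice:
    raw_text: str
    fields: dict[str, str] = field(default_factory=dict)
    issues: list[str] = field(default_factory=list)


Step = Callable[[Invoice], Invoice]


def classify(inv: Invoice) -> Invoice:
    # Stand-in for a classification agent: a keyword rule instead of a model.
    inv.fields["doc_type"] = "invoice" if "invoice" in inv.raw_text.lower() else "other"
    return inv


def extract(inv: Invoice) -> Invoice:
    # Stand-in for an extraction agent: parse simple "Key: value" lines.
    for line in inv.raw_text.splitlines():
        if ":" in line:
            key, value = line.split(":", 1)
            inv.fields[key.strip().lower()] = value.strip()
    return inv


def make_vendor_matcher(vendors: MasterDataInterface) -> Step:
    # The matcher is built around the injected asset, so the same
    # MasterDataInterface type could back a customer or material matcher too.
    def match_vendor(inv: Invoice) -> Invoice:
        vendor_id = vendors.match(inv.fields.get("vendor", ""))
        if vendor_id is None:
            inv.issues.append("vendor not found: route to change request flow")
        else:
            inv.fields["vendor_id"] = vendor_id
        return inv

    return match_vendor


def orchestrate(inv: Invoice, steps: list[Step]) -> Invoice:
    # Run the steps in order; stop early if the document is not an invoice.
    for step in steps:
        inv = step(inv)
        if inv.fields.get("doc_type") == "other":
            inv.issues.append("not an invoice")
            break
    return inv


vendors = MasterDataInterface({"acme gmbh": "V-1001"})
pipeline = [classify, extract, make_vendor_matcher(vendors)]
result = orchestrate(Invoice("INVOICE\nVendor: ACME GmbH\nAmount: 120.00"), pipeline)
print(result.fields["vendor_id"])  # V-1001
```

The design choice worth noting is that the steps are cheap and swappable, while the master data interface and the change-request routing are what a second domain would actually reuse.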

Patterns and asset inventories

A concrete example discussed was “chat over documents” in Teams or SharePoint. Many organisations rebuild this pattern repeatedly. The AI Factory approach is to build it once, document it properly, and deploy it at scale.

Assets extend beyond code and include templates, UI patterns, business case formats, datasets, evaluation frameworks, and governance checklists.
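One way to picture an asset inventory is as a small registry keyed by pattern, so a team starting a new “chat over documents” use case can look up what already exists before building. The sketch below is an assumption, not the PwC tooling described in the session: the asset kinds mirror the list above, while the names, owners, and the rule that only documented assets are offered for reuse are hypothetical choices made for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from enum import Enum


class AssetKind(Enum):
    CODE = "code"
    TEMPLATE = "template"
    UI_PATTERN = "ui_pattern"
    BUSINESS_CASE = "business_case"
    DATASET = "dataset"
    EVALUATION = "evaluation_framework"
    GOVERNANCE = "governance_checklist"


@dataclass(frozen=True)
class Asset:
    name: str
    kind: AssetKind
    pattern: str           # the delivery pattern the asset belongs to
    owner: str             # team accountable for maintaining the asset
    documented: bool = False


@dataclass
class AssetInventory:
    assets: list[Asset] = field(default_factory=list)

    def register(self, asset: Asset) -> None:
        self.assets.append(asset)

    def reusable_for(self, pattern: str) -> list[Asset]:
        # Assumption of this sketch: only documented assets are offered for
        # reuse, reflecting the "document it properly" step.
        return [a for a in self.assets if a.pattern == pattern and a.documented]


inventory = AssetInventory()
inventory.register(Asset("sharepoint-connector", AssetKind.CODE, "chat-over-documents", "platform-pod", documented=True))
inventory.register(Asset("rag-eval-set", AssetKind.EVALUATION, "chat-over-documents", "platform-pod", documented=True))
inventory.register(Asset("teams-ui-draft", AssetKind.UI_PATTERN, "chat-over-documents", "hr-pod"))

print([a.name for a in inventory.reusable_for("chat-over-documents")])
# ['sharepoint-connector', 'rag-eval-set']
```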

Andreas shared what this looks like in practice once reuse starts to compound, “After three to five use cases, we realised we could cut the time in half.”

Katharina reinforced the objective behind this approach, “The main objective is to scale, not reinvent the wheel every time.”

Teams and governance

Delivery happens in cross-functional pods. Some pods focus on business solutions, others on shared assets. Portfolio management at the hub level ensures visibility and reuse. Several speakers also mentioned reserving capacity for innovation and urgent leadership requests.

Swiss Post: Structuring AI at Scale

Swiss Post provided a concrete organisational example. Hannah Tschannen explained that they categorise AI into three groups:

- AI game changers

- Process AI

- Everyday AI, including tools like Copilot

Her key message was operational. Access does not equal value. Tool rollouts must be paired with enablement, communication, champions, and guardrails. Swiss Post actively tracks shadow AI usage and treats it as a KPI.

They also partner closely with HR, particularly for everyday AI, because workforce impact cannot be managed by IT alone.

As Hannah put it succinctly, “Just providing people with the tools is not enough.”

Swiss Post is now setting up an agent factory to support reuse and consistent delivery.

Measuring Value: Operational KPIs Over Vanity Metrics

A recurring challenge raised in the community was value attribution after deployment. Andreas explained why top-line KPIs like revenue or cost are often misleading: AI is usually one influence among many, so movements in those figures are hard to attribute to it.

The recommendation was to focus on operational KPIs close to the process, such as cycle time, error rates, throughput, and adoption. Baselines and targets must be defined before delivery starts. As Andreas put it, “Without a baseline, you cannot measure improvement later.”
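The baseline point can be shown with a little arithmetic. The sketch below assumes improvement is reported as a relative change against the pre-delivery baseline, which is one common convention rather than a method prescribed in the session. The `OperationalKpi` class and all figures are hypothetical; note that a KPI with no recorded baseline simply cannot report an improvement.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class OperationalKpi:
    name: str
    baseline: Optional[float]     # measured before delivery starts; None if never captured
    target: float                 # agreed before delivery starts
    lower_is_better: bool = True  # True for cycle time or error rate, False for throughput

    def improvement(self, current: float) -> float:
        """Relative improvement against the baseline (0.3 means 30% better)."""
        if self.baseline is None:
            raise ValueError(f"{self.name}: no baseline recorded, improvement cannot be measured")
        if self.baseline == 0:
            raise ValueError(f"{self.name}: a baseline of zero gives no relative scale")
        delta = self.baseline - current if self.lower_is_better else current - self.baseline
        return delta / self.baseline

    def target_met(self, current: float) -> bool:
        return current <= self.target if self.lower_is_better else current >= self.target


# Hypothetical figures for illustration only.
cycle_time = OperationalKpi("invoice_cycle_time_days", baseline=5.0, target=3.0)
print(round(cycle_time.improvement(3.5), 2))  # 0.3
print(cycle_time.target_met(3.5))             # False
```

Here a drop from 5.0 to 3.5 days is a 30% improvement, yet the agreed target of 3.0 days is still missed; both facts are only visible because baseline and target were fixed up front.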

Priti added a sharper filter when it comes to prioritisation, “You shouldn’t do any program if it is not associated with some sort of currency.”

Leadership and Urgency

Across the discussion, AI was framed as a board-level topic. Priti argued that modern AI capabilities fundamentally change what processes and products can look like, which requires deliberate strategic reflection, not just incremental pilots.

He described the need for leadership teams to step back and explore what is possible before committing to execution, “You’ve got to take everybody and think through what’s the possibility.”

The group also stressed urgency. In their view, AI adoption follows an exponential curve, so waiting widens the gap to competitors who redesign faster and operate with lower cost and higher margins.

At the same time, Andreas reminded the audience that transformation still happens in steps. You can accelerate them, but you cannot skip them. Many organisations are standardising while already in flight.

A Practical Starting Point for 2026

The sessions converged on a pragmatic starting sequence:

- Define two to three strategic AI bets

- Pick one pattern and extract reusable assets early

- Set up a lightweight AI Factory with portfolio visibility and one to two pods

- Define operational KPIs and baselines from day one

- Invest in enablement and AI champions, in partnership with HR

What It Takes to Scale AI

Scaling AI is not about running more pilots. It is about building the system that turns pilots into production. That system includes strategy, reuse, governance, measurement, and leadership commitment.

If you want to go deeper into the technical backbone behind AI Factories, join our next live session.

Live Community Call: How to connect MCP Servers and Tools for AI Agents?
